Last week, yet another high honor was conferred upon noted scientist Avi Wigderson.

Avi Wigderson is an Israeli mathematician and computer scientist. He is the Herbert H. Maass Professor in the School of Mathematics at the Institute for Advanced Study in Princeton, New Jersey, United States of America. His research interests include complexity theory, parallel algorithms, graph theory, cryptography, distributed computing, and neural networks. Wigderson received the Abel Prize in 2021 for his work in theoretical computer science. He also received the 2023 Turing Award for his contributions to the understanding of randomness in the theory of computation.

The photo above shows the cover of the free online (PDF) book that Professor Wigderson has kindly published. Mathematics and Computation provides a broad, conceptual overview of computational complexity theory―the mathematical study of efficient computation. With important practical applications to computer science and industry, computational complexity theory has evolved into a highly interdisciplinary field, with strong links to most mathematical areas and to a growing number of scientific endeavors.

Math is really, really hard for AI models. Complex math, such as geometry, requires sophisticated reasoning skills, and many AI researchers believe that the ability to crack it could herald more powerful and intelligent systems. This post looks, from a philosophical viewpoint, at the possibility of computers being able to reason and discover mathematical insights, and ponders the job-robbing capacity of AI, which is already being witnessed now (2024) and is expected to become a full-blown phenomenon within the next 10 years.

This post asks the central and pertinent question:

Do we understand much?

There is a huge hue and cry that:

AI is taking away jobs by the millions

If that is not yet the case, it soon will be: the process has already started.

The philosophical basis for the widespread protest is:

AI does not understand anything

The most important question in 21st-century economics may well be:

What should we do with all the superfluous people, once we have highly intelligent non-conscious algorithms that can do almost everything better than humans?

This is not an entirely new question. People have long feared that mechanization might cause mass unemployment. This never happened, because as old professions became obsolete, new professions evolved, and there was always something humans could do better than machines. Yet this is not a law of nature, and nothing guarantees it will continue to be like that in the future. The idea that humans will always have a unique ability beyond the reach of non-conscious algorithms is just wishful thinking. The current scientific answer to this pipe dream can be summarized in three simple principles:

1. Organisms are algorithms. Every animal — including Homo sapiens — is an assemblage of organic algorithms shaped by natural selection over millions of years of evolution.

2. Algorithmic calculations are not affected by the materials from which the calculator is built. Whether an abacus is made of wood, iron or plastic, two beads plus two beads equals four beads.

3. Hence, there is no reason to think that organic algorithms can do things that non-organic algorithms will never be able to replicate or surpass. As long as the calculations remain valid, what does it matter whether the algorithms are manifested in carbon or silicon?

One of the broadest ways to informally define computation, indeed the view that underpins the celebrated Church-Turing thesis, is as follows.

Computation is the evolution process of some environment by a sequence of “simple, local” steps

If this definition seems to you as capturing practically any natural process you know of, that’s precisely how I want it to sound! Of course, this definition calls for a specification of the evolving environment and a notion of granularity that will distinguish local and global, simple and complex. The most basic setting (from which the word computation originally arises), is when bits evolve in a Turing machine or in a Boolean circuit; this is one concrete example of the granularity notion, and the rules/steps of evolution. Another, still an actual computation but with completely different granularity and basic steps, arises in the evolution of the states of processors in a network, under (say) pairwise communication. And there are of course many other examples which capture (existing or imagined) computing systems.
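As a concrete toy instance of "bits evolving by simple, local steps", here is a minimal sketch in Python of an elementary cellular automaton. The specific rule (Rule 90, where each bit becomes the XOR of its two neighbours), the row width, and the wrap-around boundary are my own illustrative choices, not something taken from Wigderson's text.

```python
def rule90_step(cells: list[int]) -> list[int]:
    """One synchronous step: each bit becomes the XOR of its left and right neighbours.

    The row wraps around (cyclic boundary), so every cell has exactly two neighbours.
    """
    n = len(cells)
    return [cells[(i - 1) % n] ^ cells[(i + 1) % n] for i in range(n)]


def evolve(cells: list[int], steps: int) -> list[list[int]]:
    """Apply the local rule `steps` times and return every state, starting with the initial one."""
    history = [cells]
    for _ in range(steps):
        cells = rule90_step(cells)
        history.append(cells)
    return history


if __name__ == "__main__":
    start = [0] * 7 + [1] + [0] * 7  # a single 1 in the middle of 15 cells
    for row in evolve(start, 7):
        print("".join("#" if bit else "." for bit in row))
```

Starting from a single 1, the printed rows trace out a Sierpinski-triangle pattern, even though no step ever looks beyond a cell's immediate neighbours.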

The main point I wish to make is that this viewpoint of processes as computation extends to numerous other settings, vastly removed from computers. In each setting, many different choices of granularity, and simple and local rules (which may be given by nature or made up by us), will lead to different evolution processes. Here is a partial list of environments with such interacting parts, which in all cases can shed their physical characteristics and be viewed as transformations of pure information:

Bits in a computer

Computers in a network

Atoms in matter

Neurons in the brain

Proteins in a cell

Cells in a tissue

Bacteria in a Petri dish

Deductions in proof systems

Prices in a market

Individuals in a population

Stars in galaxies

Friends on Facebook

Quantum Bits (Qubits) in entangled states

All of these are playgrounds where theorists of computation have a role to play! The computational modeling and quantitative study of the resources expended by all such processes, what they “compute” and other properties of this evolution, is the bread and butter of the theory of computation (ToC).

At this point we are informed that:

Computing is very broadly found in nature

Computing may give rise to autonomous intelligence

Let me then ask you:

Do we understand much? In what way are we uniquely different from the AI of the future?

In the rest of the post, I will provide an example intended to force us to think about this central question.

Let's take an apparently simple problem from arithmetic:

How do you prove, without summing, that:

126785 + 672347 = 561236 + 237896

If you sum both sides of the equation, you can show that:

799132 = 799132

But you are not allowed to sum; this constraint is there to test whether:

You understand much about something as simple as Addition.

At first glance this problem appears unsolvable without summing. But let us remember that mathematics is about patterns. Mathematicians say that mathematics is the study of pattern: of patterns and structure in numbers, and patterns and structure in geometry. Seeing pattern and structure in the world around us is a key mathematical habit of mind, and one that children are developing from the first days of life.

So here is one proof in the spirit of patterns:

Notice that 126785 is related to 237896 since each digit has been increased by 1:

237896 = 126785 + 111111 (this fact is self-evident without summing)

Also notice that 672347 is related to 561236 since each digit is decreased by 1:

561236 = 672347 - 111111 (this fact is also self-evident without subtracting)
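The two "self-evident" relationships can also be checked mechanically, digit by digit, without ever adding the six-digit numbers. Below is a minimal Python sketch; the helper names are hypothetical. The check is valid here because no digit of 126785 is a 9 and no digit of 672347 is a 0, so shifting every digit by 1 never produces a carry or a borrow.

```python
def digits(n: int) -> list[int]:
    """Decimal digits of a non-negative integer, most significant first."""
    return [int(ch) for ch in str(n)]


def differs_by_one_per_digit(smaller: int, larger: int) -> bool:
    """True if `larger` is obtained from `smaller` by adding 1 to every digit.

    When this holds, larger - smaller is 11...1 (same number of digits),
    and no carries are involved anywhere.
    """
    a, b = digits(smaller), digits(larger)
    return len(a) == len(b) and all(y - x == 1 for x, y in zip(a, b))


if __name__ == "__main__":
    print(differs_by_one_per_digit(126785, 237896))  # i.e. 237896 = 126785 + 111111
    print(differs_by_one_per_digit(561236, 672347))  # i.e. 561236 = 672347 - 111111
```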

So:

561236 + 237896

= (672347 - 111111) + (126785 + 111111)

= 672347 + 126785

Is this equal to 126785 + 672347?

You say: Yes, obviously!

Ask yourself again: how do you know that? Can you demonstrate that Addition is commutative?

* Stating that it is obvious will not count

* Demonstrating with small numbers will not count

For fun, I posed this arithmetic challenge to Google Gemini:

Prompt > How do you prove without summing that:

126785 + 672347 = 561236 + 237896

Here is the response from the billion-dollar Large Language Model (LLM):

Proving the equation 126785 + 672347 = 561236 + 237896 without directly summing the digits involves checking the sum at each place value column.

Let's break down the equation by place value:

Tens of Thousands Place: 1 + 6 = 7. On the right side, 5 + 2 = 7. Both sides have the same sum (7) at the tens of thousands place.

Thousands Place: 2 + 7 = 9. On the right side, 6 + 3 = 9. Again, both sides have the same sum (9) at the thousands place.

Hundreds Place: 6 + 2 = 8. On the right side, 1 + 7 = 8. Once more, both sides have the same sum (8) at the hundreds place.

Tens Place: 7 + 3 = 10. Here's where it gets interesting. A sum of 10 in a single place column typically indicates a "carry-over" to the next place value. On the right side, we have 2 + 8 = 10, also resulting in a carry-over.

Ones Place: 8 + 7 = 15. On the right side, we have 3 + 6 = 9. This is where the equation breaks down. If the left side truly resulted in the same value as the right side at each place value, the sum of the ones digits should also be the same. Since 15 is not equal to 9, the equation must be false.

Therefore, even without summing all the digits individually, analyzing the place values demonstrates that the equation 126785 + 672347 is not true.

My Analysis

The concluding sentence (the Q.E.D.) is both grammatically and factually incorrect. It does not even state the full equation:

126785 + 672347 = 561236 + 237896

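It then declares a true identity to be false. The place-value pathway is not logical either: its place labels are shifted by one position, it reports only five columns for six-digit numbers, and its final column mixes digits from different positions. It would be unfortunate if a budding student relied on this faulty reasoning.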
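For comparison, here is a minimal Python sketch (my own illustration, not Gemini's output) of the column-by-column check carried out with the digits read correctly. If every place-value column holds the same digit sum on both sides, the two totals must be equal, because each total is the sum of (column sum) × 10^position; no carrying is ever needed. The helper name `column_sums` is hypothetical.

```python
def column_sums(x: int, y: int, width: int) -> list[int]:
    """Digit sums of x and y in each place-value column, ones column first.

    No carries are propagated: each entry is just (digit of x) + (digit of y).
    """
    sums = []
    for _ in range(width):
        sums.append(x % 10 + y % 10)
        x //= 10
        y //= 10
    return sums


if __name__ == "__main__":
    left = column_sums(126785, 672347, 6)
    right = column_sums(561236, 237896, 6)
    print(left)           # column sums for the left-hand side, ones column first
    print(right)          # the same columns for the right-hand side
    print(left == right)  # equal columns imply equal totals
```

Both sides give the column sums 12, 12, 10, 8, 9, 7 (ones column first), so the identity holds, contrary to the conclusion Gemini reached.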

Let's turn our attention to the question posed earlier:

Is 672347 + 126785 equal to 126785 + 672347?

We feel that we know Arithmetic intimately.

We do not. Otherwise, the book Principia Mathematica would not have devoted more than 300 pages to prove 1 + 1 = 2. Here is the relevant fact from Wikipedia:

Let's try to understand why the apparently simple natural numbers present such deep complexity. To first appearances, there’s a very simple description: Start with 0, then add 1, then add 1 again, keep doing this forever, and those are the natural numbers. Unfortunately, “keep doing this forever” is a little vague, and the complexity comes in when you try to make that precise.

So if you were setting out to give a complete description of the natural numbers, where would you start? Probably here:

We have a number called zero, and then every number has a successor.

But that description fits a lot of things besides the natural numbers; it also fits, for example, the integers (the integers, unlike the natural numbers, include negatives). Here’s an attempt to fix that:

We have a number called zero, and then every number has a successor, and zero is not the successor of any number.

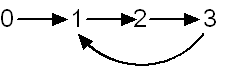

Better, but still no good; this fails to rule out a system where 1 follows 0, 2 follows 1, 3 follows 2, and 1 follows 3, like this:

To fix that, we have to add a clause specifying that no two numbers (such as 0 and 3) have the same successor. But even now, we’ve only just begun.
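The clauses added so far can be tested against small candidate "number systems". Here is a minimal Python sketch, assuming we represent a system as a finite dictionary mapping each element to its successor (a representation chosen purely for illustration; the function names are hypothetical). The cycle 0 → 1 → 2 → 3 → 1 passes the "zero is not a successor" test but fails the "no two numbers share a successor" test, since both 0 and 3 map to 1.

```python
def zero_not_successor(succ: dict[int, int], zero: int = 0) -> bool:
    """Clause: zero is not the successor of any number."""
    return zero not in succ.values()


def successor_injective(succ: dict[int, int]) -> bool:
    """Clause: no two different numbers have the same successor."""
    return len(set(succ.values())) == len(succ)


if __name__ == "__main__":
    cycle = {0: 1, 1: 2, 2: 3, 3: 1}  # 1 follows 0, 2 follows 1, 3 follows 2, 1 follows 3
    print(zero_not_successor(cycle))   # the cycle never returns to 0
    print(successor_injective(cycle))  # but 0 and 3 share the successor 1
```

No finite system of this kind can pass both tests: a successor map that is injective on finitely many elements (and stays within them) is a bijection, so zero would have to be somebody's successor.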

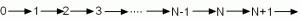

We still haven’t ruled out the possibility of infinite gaps between numbers. For all we can tell from our description so far, the number system might look like this:

with infinitely many numbers in between 3 and that very large number N. How can we rule that out?

This one is not so easy. We’d like to say that all gaps between numbers are finite. But how do we define “finite”? Usually we say a number is finite if it’s part of the set of natural numbers. Or to put this another way: We’d like to say that no matter where you start (say at N), you can’t count backward forever; eventually you’ve got to hit a stopping point. But what does it mean to count backwards forever? It means counting back more than a natural number of steps. There’s that circularity again.

What we really really need, it turns out, is to add a clause like this to our description:

Every non-empty subset of the natural numbers has a smallest element.

This will solve our problem, because it implies, for example, that the set of numbers you can reach by counting backwards from N has a smallest element—eliminating the possibility of that infinite gap.

But this assumption, stated in this way, opens a can of worms that almost nobody wants to open. Here’s why: For the first time, we’ve been forced to talk about sets of natural numbers, as opposed to natural numbers themselves, and even to talk about all those sets at once. In technical jargon, we’ve left the world of first-order logic and entered the world of second-order logic. But that’s a very strange world indeed. In ordinary (first-order) logic, we have a small number of rules of inference that allow us to proceed, for example, from “Socrates is a man” and “All men are mortal” to “Socrates is mortal”. But in second-order logic, not only are the rules of inference not finite; they cannot even be printed out (even in an infinite amount of time) by any computer. That’s why the great logician Willard Van Orman Quine insisted that second-order logic is not logic, and why mathematicians usually prefer to avoid it.

From this definition of the natural numbers, it is possible to give a mechanical proof that a + b = b + a, a property known as the commutativity of addition. Finally we arrive at:

672347 + 126785 = 126785 + 672347
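For the curious, here is what such a mechanical proof can look like, as a minimal sketch in Lean 4. It is my own illustration, not the proof in Principia Mathematica: the type `MyNat` and the theorem names are hypothetical, and instead of the "smallest element" clause it relies on proof by induction, which Lean builds into every inductive type and which is closely related to that clause.

```lean
-- A hypothetical Peano-style type: zero, and a successor for every number
inductive MyNat where
  | zero : MyNat
  | succ : MyNat → MyNat

namespace MyNat

-- Addition by recursion on the second argument:
--   a + 0 = a   and   a + succ b = succ (a + b)
def add : MyNat → MyNat → MyNat
  | a, zero   => a
  | a, succ b => succ (add a b)

-- Lemma: 0 + a = a, by induction on a
theorem zero_add (a : MyNat) : add zero a = a := by
  induction a with
  | zero => rfl
  | succ n ih => simp [add, ih]

-- Lemma: succ a + b = succ (a + b), by induction on b
theorem succ_add (a b : MyNat) : add (succ a) b = succ (add a b) := by
  induction b with
  | zero => rfl
  | succ n ih => simp [add, ih]

-- Commutativity: a + b = b + a, by induction on b using the two lemmas
theorem add_comm (a b : MyNat) : add a b = add b a := by
  induction b with
  | zero => simp [add, zero_add]
  | succ n ih => simp [add, succ_add, ih]

-- A tiny instance: 1 + 2 = 2 + 1
example : add (succ zero) (succ (succ zero)) = add (succ (succ zero)) (succ zero) :=
  add_comm _ _

end MyNat
```

With `add_comm` in hand, 672347 + 126785 = 126785 + 672347 is simply the instance a = 672347, b = 126785; no summing is required.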

You might be feeling a little lost by now, so let us summarize what has transpired in this article so far:

A simple arithmetic problem was posed, to which Google Gemini provided an utterly incorrect proof and a faulty explanation

The correct solution relied on spotting patterns among the given numbers

True understanding of this problem requires a deeper understanding of the axioms of arithmetic

As of now, we can do a lot with a high-level, superficial understanding

Perhaps in the near future AI can match and surpass our problem-solving capacity based on a similar high-level, superficial understanding

Finally we are ready to tackle the central question:

Why Shouldn't AI Take Away Jobs?

I believe, as a society, we should collectively confront:

How much do we understand and how much does it matter?

I once got caught for forging a signature. Here is what happened:

I received a very poor education in India. In fact, billions of people worldwide are receiving a very poor education. In grade 6, one of my teachers had a pedagogic method that I violently disagreed with. The teacher used to subject us to a very peculiar kind of homework:

Copy two pages from the textbook into the notebook and get the copy signed by one of your parents. Even in grade 6, I was deeply frustrated with this kind of mindless assignment, handed out a few times a week. So, in frustration, I stopped taking the copy to my parents and started signing it myself. The teacher recognised the signature as a sixth grader's, and I got caught.

What is Machine Learning? Is it not similar to copying from a textbook into a notebook and then getting the copy certified by machine parents (the ML trainers)? As I said, I violently disagreed that this kind of Training can result in Learning.

The argument and thought-experiment now generally known as the Chinese Room Argument was first published in a 1980 article by American philosopher John Searle (1932– ). It has become one of the best-known arguments in recent philosophy. Searle imagines himself alone in a room following a computer program for responding to Chinese characters slipped under the door. Searle understands nothing of Chinese, and yet, by following the program for manipulating symbols and numerals just as a computer does, he sends appropriate strings of Chinese characters back out under the door, and this leads those outside to mistakenly suppose there is a Chinese speaker in the room.

The narrow conclusion of the argument is that programming a digital computer may make it appear to understand language but could not produce real understanding. Hence the “Turing Test” is inadequate. Searle argues that the thought experiment underscores the fact that computers merely use syntactic rules to manipulate symbol strings, but have no understanding of meaning or semantics. The broader conclusion of the argument is that the theory that human minds are computer-like computational or information processing systems is refuted. Instead minds must result from biological processes; computers can at best simulate these biological processes. Thus the argument has large implications for semantics, philosophy of language and mind, theories of consciousness, computer science and cognitive science generally. As a result, there have been many critical replies to the argument.

As anthropologist David Graeber argues in his book Bullshit Jobs: A Theory (2018), the productivity benefits of automation have not led to a 15-hour workweek, as predicted by economist John Maynard Keynes in 1930, but instead to "bullshit jobs": "a form of paid employment that is so completely pointless, unnecessary, or pernicious that even the employee cannot justify its existence even though, as part of the conditions of employment, the employee feels obliged to pretend that this is not the case." Many people working these pointless jobs know that their work does not contribute to society in any meaningful way. A review of the book notes, "Technology has advanced to the point where most of the difficult, labor-intensive jobs can be performed by machines." Instead of producing more fulfilling jobs, the economy creates meaningless ones simply to give everyone an opportunity to work. While these jobs can offer good compensation and ample free time, the pointlessness of the work grates at the humanity of those doing it and creates a "profound psychological violence".

According to this view, more than half of societal work is pointless, comprising both large parts of some jobs and five types of entirely pointless jobs:

Flunkies, who serve to make their superiors feel important, e.g., receptionists, administrative assistants, door attendants, store greeters;

Goons, who act to harm or deceive others on behalf of their employer, or to prevent other goons from doing so, e.g., lobbyists, corporate lawyers, telemarketers, public relations specialists;

Duct tapers, who temporarily fix problems that could be fixed permanently, e.g., programmers repairing shoddy code, airline desk staff who calm passengers with lost luggage;

Box tickers, who create the appearance that something useful is being done when it is not, e.g., survey administrators, in-house magazine journalists, corporate compliance officers;

Taskmasters, who create extra work for those who do not need it, e.g., middle management, leadership professionals.

These jobs are largely in the private sector despite the idea that market competition would root out such inefficiencies.

Work as a source of virtue is a recent idea. In fact, work was disdained by the aristocracy in classical times, but it was recast as virtuous by then-radical philosophers such as John Locke. The Puritan idea of virtue through suffering justified the toil of the working classes as noble. And so, bullshit jobs justify contemporary patterns of living: the pains of dull work are a suitable justification for the ability to fulfill consumer desires, and fulfilling those desires is indeed the reward for suffering through pointless work. Accordingly, over time, the prosperity extracted from technological advances has been reinvested into industry and consumer growth for its own sake rather than into the purchase of additional leisure time from work.[2] Bullshit jobs also serve political ends, in which political parties are more concerned about having jobs than about whether the jobs are fulfilling. In addition, populations occupied with busy work have less time to revolt.

A potential solution would be a universal basic income, a livable benefit paid to all, without qualification, which would let people work at their leisure. A natural human work cycle of cramming and slacking is the most productive way to work: even in the modern economy, workers such as farmers, fishers, soldiers, and novelists vary the intensity of their work based on the urgency to produce and the natural cycles of productivity, not arbitrary standard working hours. Time not spent pursuing pointless work could instead be spent pursuing creative activities.

Finally I implore you to watch this hilarious video on Nothing University:

I want to end this post by singing "Aquarius/Let the Sunshine In" by The 5th Dimension:

When the moon is in the Seventh House

And Jupiter aligns with Mars

Then peace will guide the planets

And love will steer the stars

This is the dawning of the Age of Aquarius

The Age of Aquarius

Aquarius! Aquarius!

Harmony and understanding

Sympathy and trust abounding

No more falsehoods or derisions

Golden living dreams of visions

Mystic crystal revelation

And the mind's true liberation

Aquarius! Aquarius!

When the moon is in the Seventh House

And Jupiter aligns with Mars

Then peace will guide the planets

And love will steer the stars

This is the dawning of the Age of Aquarius

The Age of Aquarius

Aquarius! Aquarius!

Let the sun shine, Let the sun shine in

The sun shine in

Let the sun shine, Let the sun shine in

The sun shine in

Let the sun shine, Let the sun shine in

The sun shine in